Your AI Is Moving at the Wrong Speed

Part of the series: AI at the Wrong Speed

Enterprise AI is already everywhere: announcements, pilots, dashboards, and press releases arrive every day. Service providers are racing to close implementation contracts. Some CIOs, under pressure to demonstrate innovation to their boards, are sprinting alongside. Speed is the metric: deployment timelines have shrunk, and POCs have multiplied.

But, as you may have noticed, some mature organisations have quietly begun to reverse course.

Walmart recently pulled back from its AI-powered checkout initiative developed in collaboration with OpenAI. The programme, which generated considerable attention when it launched, has been suspended while the company recalibrates its approach. This pullback represents capital spent, organisational energy consumed, and an implementation that fizzled when operational reality set in.

If you look around, you will find that Walmart isn’t alone. Across sectors, organisations that moved fast on AI are discovering that speed of deployment and depth of adoption are not the same thing.

The Provider Incentive Problem

To understand why this is happening at scale, it helps to look at the incentive structure around AI implementation.

Technology service providers are rewarded for deployment, not for adoption. Their commercial models are built around licensing arrangements, managed services, or SI engagements. These contracts close when the solution goes live. What happens to organisational capability, process integration, or employee adoption after go-live is usually outside the scope of the engagement.

This creates a structural pull toward speed. Faster deployment means faster revenue recognition for the provider. A CIO who has committed to the board that AI will be live within a short timeframe has every incentive to accept this framing.

The result is implementations that are technically complete but organisationally unsound.

The British Airways Lesson

We have seen this movie before, in the early waves of digital transformation, and have not learned much from it.

British Airways committed heavy investment to a large-scale digital transformation programme. The programme stalled somewhere in the middle, because the organisation was not ready to absorb what the programme was producing. BA stepped back, spent considerable time and money recalibrating, and restarted. The cost of that cycle, in terms of capital destroyed, momentum lost, and institutional confidence damaged, was significant and largely avoidable.

This and hundreds of other such failures should have taught the industry something. When an organisation hurtles into a transformation before it has built the organisational foundations to sustain it, the failure arrives slowly, expensively, and in a way that makes the next attempt harder to justify internally. This is the exact opposite of “fail fast”. Apart from the wasted investment, these slow-burn failures leave behind a cultural scar that takes years to heal.

The Spike and Why It Collapses

Now, it is not as if every AI implementation fails visibly. Some begin with a spike.

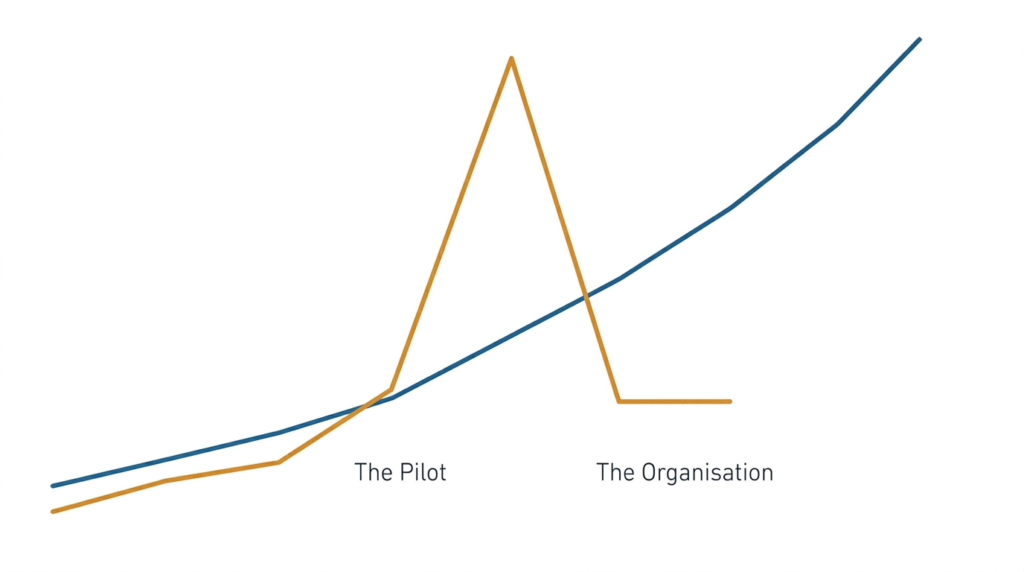

A spike is a sharp, short-lived performance gain. In today’s AI world, this could be a pilot that delivers impressive ML accuracy numbers, a chatbot that deflects thousands of support tickets in the first month, or, say, a computer vision model that catches defects at a rate beyond human capability. These results, which are completely valid, get presented to boards, written into case studies, touted at conferences (sponsored by the service provider), and used to justify the next round of investment.

These spikes, however, are conditions, not capabilities. They are produced by a focused team, a controlled environment, and a narrow use case that has been optimised for a show-and-tell. When the same solution goes out into the enterprise, across messy (or incomplete) data, legacy processes, tight security controls, and a workforce that was not part of the pilot, the numbers go haywire.

The Slope Framework, published on Substack, offers a useful lens that can be adapted here. The framework draws a distinction between the intercept, a snapshot of where something stands at a moment, and the slope, which is the trajectory of improvement over time. Jim Collins, in his famous Good to Great, made a related argument: companies celebrated for dramatic performance at a point in time frequently failed to sustain it. The intercept always looked impressive, but the slope (the trajectory) did not hold.

Similarly, in AI, the spike is the intercept. An organisation that has not built the underlying foundations (clean data, redesigned processes, genuine workforce capability) has no slope to fall back on when the spike fades.

What Is Actually Being Skipped

Organisations that have just started seeing rough edges in their AI implementations are seeing them because, in the rush to go live, they neglected the unglamorous, non-headline work. This phase of work is neither fast nor visible. In the short term, and to the inexperienced, it looks like delay. Boards and steering committees see costs accumulating without corresponding output. The temptation to accelerate past this phase, or skip it entirely, is precisely where most implementations go wrong.

The question for Part 2 of this series is not whether AI works. The question is what organisations are actually measuring when they decide it is working, and why those measurements are leading them to the wrong conclusions.

Next: Your AI ROI Numbers Are Telling You the Wrong Story

3nayan’s AIM FRAMEWORK

Readiness Assessment

Do a quick assessment of your organisation’s readiness for AI implementation and adoption. Five minutes, 30 questions, free. Enough to give you a directional view, and enough to help you decide whether a deeper check and a roadmap are worth considering.