Your AI ROI Numbers Are Telling You the Wrong Story

Part of the series: AI at the Wrong Speed

How does a board usually learn whether an AI implementation is working? Almost invariably, the answer is a number: cost savings, percentage productivity improvement, tickets deflected, headcount avoided. These numbers are real and measurable, but they are the wrong things to look at.

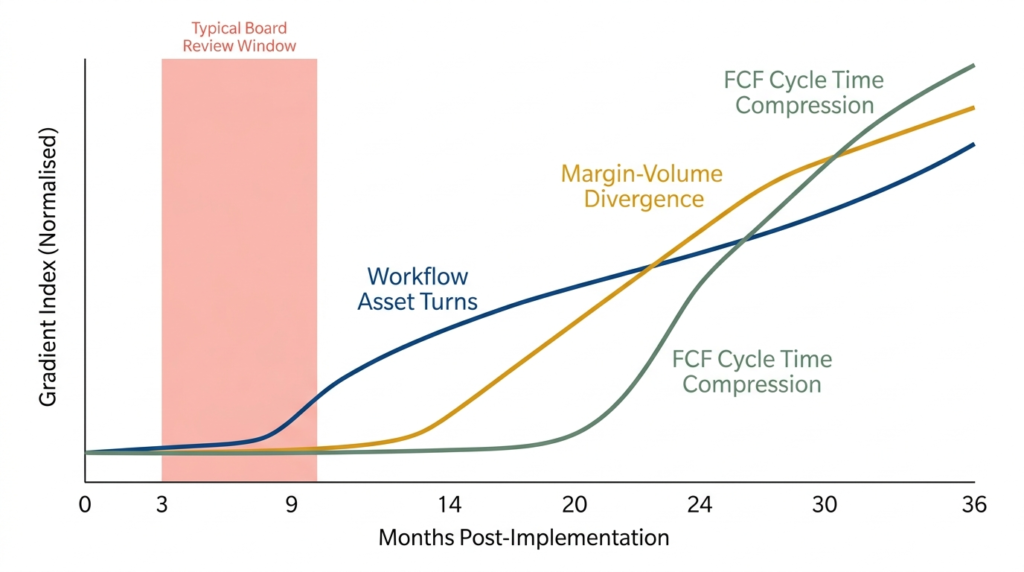

The problem with using ROI snapshots to evaluate AI implementation is that they measure the wrong moment, the wrong state of the system. A P&L impact figure tells you where the organisation stood at the point of measurement, making it a lagging indicator. It says nothing about whether the organisation is becoming more capable, more adaptive, or able to extract more value from AI over time. That is an intercept, not a slope.

And that slope is what determines outcomes in the longer term.
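
To make the distinction concrete, here is a minimal sketch in Python. It fits a straight line through a series of periodic impact figures and reports the intercept (the fitted value at the first period) separately from the slope (the change per period). The function name `fit_line` and the quarterly figures are hypothetical and purely illustrative; a real review would use its own data and possibly a more robust trend estimate.

```python
from statistics import mean


def fit_line(values):
    """Ordinary least-squares fit of values against period index 0..n-1.

    Returns (intercept, slope): the fitted value at period 0 and the
    change per period.
    """
    n = len(values)
    if n < 2:
        raise ValueError("need at least two periods to estimate a slope")
    xs = range(n)
    x_bar = mean(xs)
    y_bar = mean(values)
    sxx = sum((x - x_bar) ** 2 for x in xs)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, values))
    slope = sxy / sxx
    intercept = y_bar - slope * x_bar
    return intercept, slope


# Hypothetical quarterly net impact figures (illustrative only).
programme_a = [10.0, 10.0, 10.0, 10.0]  # healthy snapshot, flat trajectory
programme_b = [-4.0, -2.0, 0.0, 2.0]    # poor early snapshot, improving trajectory

for name, series in (("A", programme_a), ("B", programme_b)):
    intercept, slope = fit_line(series)
    print(f"Programme {name}: intercept={intercept:.1f}, slope={slope:.1f}")
```

In this made-up example, a review based on the early snapshot would favour programme A, while the slope shows that only programme B is improving from one period to the next.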

Why Boards Pull the Plug

Let us understand how AI implementations are reviewed. That will show why the review process itself so often leads to AI failures.

Most AI pilots are evaluated in isolation. Usually, a use case is selected, a solution is deployed, a measurement period is defined, and a result is produced. That result is then compared to the investment. If the ratio is unfavourable, or if the pilot has not produced a visible P&L impact within the expected time window, the programme is reviewed, scaled back, or stopped.

This evaluation model has three structural problems. First, the pilot is rarely connected to a full process chain. An AI model that improves one step in a multi-step workflow produces a result that is real but not operationally significant. The overall process does not move faster or better. There is no marked difference in customer metrics (C-SAT, engagement, stickiness, conversion). The cost saving is not large enough to show up in the accounts because the surrounding process absorbs it.

Second, the measurement window is almost always too short. AI implementations that are genuinely transformative require an organisational learning curve. Data quality improves over time, and employee adoption deepens. Similarly, process redesign, which initially slows things down, eventually produces compounding efficiency. None of this is visible in a quarterly review.

Third, and most consequentially, the budget has usually been sized for the deployment, not for the full transformation cycle. In most genuine transformations the costs are front-loaded, so through the early and middle phases costs run ahead of visible returns and the programme appears to be failing. The CIO and the board measure the intercept and see costs accumulating without corresponding output. The “rational” response is to stop the programme.

Jim Collins documented a version of this in Good to Great: companies celebrated for dramatic performance at a specific point in time frequently did not sustain it. With no substance in the trajectory, the momentary euphoria invariably fizzled. Most AI programme reviews measure the intercept of the transformation, a snapshot. What they should measure is whether the slope is building.

The Three Gradients That Actually Matter

The following three financial gradients, rather than a vanilla P&L figure, give a more accurate picture of whether an AI implementation is being institutionalised or is merely an experiment.

Workflow asset turns. Task automation rate multiplied by quality score, for example, is a better measure than a drop in headcount. Is AI handling a growing proportion of defined tasks, and is it doing so with improving accuracy over time? A rising curve here indicates that the organisation is genuinely absorbing AI into its operating model, not just running a parallel pilot.
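
As a rough sketch of how this gradient could be computed, the Python below multiplies the automation rate by a quality score for each period and checks whether the curve is rising. The function name `workflow_asset_turn`, the 0 to 1 scale for the quality score, and the sample figures are all assumptions made for illustration.

```python
def workflow_asset_turn(tasks_automated, tasks_total, quality_score):
    """Automation rate multiplied by quality score (both on a 0..1 scale)."""
    if tasks_total <= 0:
        raise ValueError("tasks_total must be positive")
    if not 0.0 <= quality_score <= 1.0:
        raise ValueError("quality_score must be between 0 and 1")
    automation_rate = tasks_automated / tasks_total
    return automation_rate * quality_score


# Hypothetical periods: (tasks automated, total defined tasks, quality score).
periods = [(200, 1000, 0.80), (300, 1000, 0.85), (450, 1000, 0.90)]

turns = [workflow_asset_turn(a, t, q) for a, t, q in periods]

# The signal of interest is the direction of travel, not any single value.
is_rising = all(later > earlier for earlier, later in zip(turns, turns[1:]))

print([round(t, 3) for t in turns], "rising:", is_rising)
```

Multiplying the two factors means that automating more tasks at lower quality does not automatically register as progress.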

Margin-volume divergence. As AI takes over commodity tasks, human effort should shift toward higher-value work. The signal to track is AI-augmented output value per full-time equivalent compared to a pre-implementation baseline. If this ratio is improving, AI is shifting the organisation up the value curve. If it is flat or declining, the implementation is adding cost without repositioning capability.
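
One possible way to express this ratio is sketched below in Python. The helper names `value_per_fte` and `uplift_vs_baseline`, and all of the figures, are hypothetical; how "AI-augmented output value" is defined will differ from one organisation to another.

```python
def value_per_fte(output_value, fte_count):
    """Output value divided by the number of full-time equivalents."""
    if fte_count <= 0:
        raise ValueError("fte_count must be positive")
    return output_value / fte_count


def uplift_vs_baseline(current, baseline):
    """Ratio of current value per FTE to the pre-implementation baseline.

    Above 1.0 suggests a shift up the value curve; at or below 1.0
    suggests cost is being added without repositioning capability.
    """
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    return current / baseline


# Hypothetical figures, for illustration only.
baseline = value_per_fte(5_000_000, 50)  # before implementation
current = value_per_fte(5_720_000, 52)   # AI-augmented, latest period

ratio = uplift_vs_baseline(current, baseline)
print(f"Value per FTE vs baseline: {ratio:.2f}x")
```

Tracked period after period, this ratio becomes a trend line rather than a one-off comparison.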

Workflow cycle time compression. This is a useful proxy for working capital efficiency that most organisations overlook entirely. AI that is genuinely embedded in operational processes reduces throughput time: faster decision cycles, shorter processing windows, quicker exception handling. This compression reduces working capital friction in ways that do not immediately show up on a P&L but are significant at scale. Tracking cycle time across AI-enabled workflows, compared to baseline, reveals whether the implementation is hardening into the organisation’s operating fabric or sitting alongside it.
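
A minimal sketch of the baseline comparison, in Python, might look like the following. Using the median cycle time is an assumption made here to keep the figure robust to a few unusually slow cases; the function name `cycle_time_compression` and the sample hours are hypothetical.

```python
from statistics import median


def cycle_time_compression(baseline_hours, current_hours):
    """Fractional reduction in median cycle time relative to baseline.

    0.25 means the typical case now completes 25% faster; a negative
    value means the workflow has slowed down.
    """
    base = median(baseline_hours)
    now = median(current_hours)
    if base <= 0:
        raise ValueError("baseline median must be positive")
    return (base - now) / base


# Hypothetical end-to-end cycle times, in hours, for one workflow.
baseline_hours = [40, 48, 52, 44, 60]  # before AI was embedded
current_hours = [30, 36, 42, 33, 39]   # latest measurement period

compression = cycle_time_compression(baseline_hours, current_hours)
print(f"Cycle time compression: {compression:.0%}")
```

Measuring the full workflow from start to finish, rather than only the AI-enabled step, keeps the figure honest about whether the surrounding process is absorbing the gain.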

The Measurement Shift Required

None of these three gradients is complicated to track. The question boards really need to ask is: “Is our AI capability compounding?”

That shift in measurement philosophy is, in practice, a shift in governance. It requires:

- AI programme reviews to examine trend lines rather than point-in-time results.

- Investment cases that are built around capability development rather than cost recovery.

- Boards and steering committees that are “equipped” to read slope, not just intercept.

We believe the organisations that are quietly building this measurement discipline now are the ones whose AI programmes will still be running, and accelerating, in three years. The ones pushing rapid deployment and reviewing quarterly P&L snapshots will keep cycling through the same stop-start pattern that has characterised the last two years of enterprise AI adoption.

This is Part 2 of the series AI at the Wrong Speed.

Part 1: Your AI Implementation Speed Is Working Against You — examines why service providers, CIOs, and deployment timelines are structurally incentivised toward speed over readiness.

Part 3: Building the Slope: What AI Readiness Actually Looks Like — sets out the organisational readiness model and what slope-building looks like in practice.

3nayan’s AIM framework

Your FREE AI Readiness Assessment

Take a quick assessment of your organisation’s readiness for AI implementation and adoption. Five minutes, 30 questions, FREE. Enough to give you a directional view, and enough to help you decide whether a deeper check and a roadmap are worthwhile.