Building the Slope: What AI Readiness Actually Looks Like

Part of the series: AI at the Wrong Speed

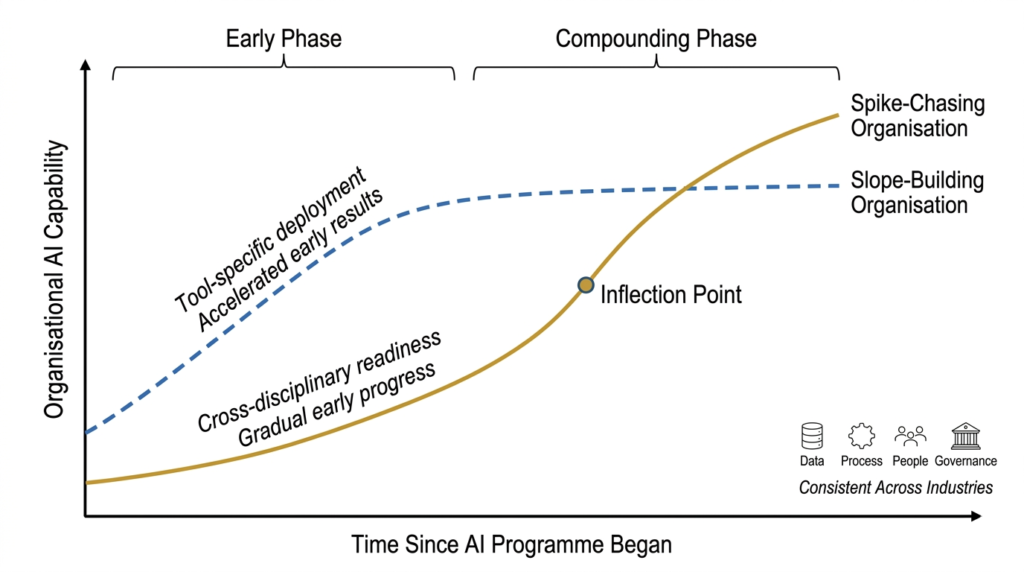

The organisations that will define enterprise AI over the next decade aren’t necessarily the ones with the largest implementation budgets or the most aggressive deployment timelines. They are the ones that are, right now, doing work that is largely invisible: cleaning data, redesigning processes, building workforce capability, and establishing governance structures that will compound over time. They are doing it regardless of the service providers pushing for speed of deployment.

This is what building the slope (or the trajectory, if you will) looks like. It is unglamorous, it is slow to show results, and it is precisely what most organisations are skipping.

What Current Readiness Assessments Get Wrong

There are many AI readiness assessments available, including those from the MBBs and the Big5s. What most of these tools share is a common flaw: they measure adoption rather than velocity.

An adoption-based assessment asks whether an organisation has deployed AI tools, whether employees are using them, and whether governance policies exist. These are all static measures. They capture the intercept, a state of the organisation, and neglect the rate at which organisational AI capability is developing.

A velocity-based assessment asks different questions. Are data quality scores improving month on month? Are process owners redesigning workflows around AI constraints and capabilities, or working around them? Is the organisation’s ability to formulate AI-solvable problems getting sharper over time? These are slope or trajectory measures. They reveal whether the organisation is learning, not just deploying. By contrast, the current accuracy of an AI model as reported in MLOps and the immediate P&L impact of an AI-enabled process step are examples of static measures.
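To make the intercept versus slope distinction concrete, here is a minimal Python sketch that fits a straight line to a series of monthly scores. The function name `velocity`, the 0 to 100 scale, and both sample series are hypothetical illustrations rather than figures from any real assessment, and a simple least-squares line is only one possible way to estimate a trend.

```python
def velocity(scores):
    """Fit a least-squares line through monthly scores.

    Returns (slope, intercept): slope is the average change per month,
    intercept is the fitted starting level at month 0.
    """
    n = len(scores)
    if n < 2:
        raise ValueError("need at least two monthly scores to estimate a slope")
    mean_x = (n - 1) / 2                      # months are indexed 0, 1, ..., n-1
    mean_y = sum(scores) / n
    sxy = sum((i - mean_x) * (y - mean_y) for i, y in enumerate(scores))
    sxx = sum((i - mean_x) ** 2 for i in range(n))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Hypothetical monthly data quality scores for two organisations.
high_adoption_flat = [82, 82, 83, 82, 83, 82]      # strong today, barely moving
low_adoption_learning = [55, 58, 62, 65, 69, 72]   # weaker today, improving steadily

for name, scores in [("flat", high_adoption_flat), ("learning", low_adoption_learning)]:
    slope, intercept = velocity(scores)
    print(f"{name}: intercept={intercept:.1f}, slope={slope:.2f} points/month")
```

An adoption-based assessment would effectively report only the latest score from each series, while a velocity-based one would also report the slope.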

The distinction matters because an organisation with modest current AI adoption but strong learning velocity will invariably outperform an organisation with high adoption and flat velocity within 18 to 24 months. Current assessments are blind to this; in essence, they are looking at lagging indicators.
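The arithmetic behind this kind of overtaking can be sketched under a deliberately simple assumption: that capability grows linearly, as intercept plus slope times months. The function `crossover_month` and every number below are hypothetical, chosen only to show how a lower starting point with a steeper slope catches up. They are not evidence for the 18 to 24 month figure.

```python
def crossover_month(intercept_a, slope_a, intercept_b, slope_b):
    """Month at which organisation B catches up with organisation A.

    Assumes a linear model: capability(t) = intercept + slope * t.
    Returns 0.0 if B already starts level or ahead, and None if B
    starts behind and never catches up.
    """
    gap = intercept_a - intercept_b
    if gap <= 0:
        return 0.0
    if slope_b <= slope_a:
        return None
    return gap / (slope_b - slope_a)


# Hypothetical capability scores: A has high adoption and a nearly flat slope,
# B has modest adoption and a steeper learning slope.
months = crossover_month(intercept_a=60, slope_a=0.5, intercept_b=30, slope_b=2.0)
print(months)  # 20.0
```

With these illustrative inputs the 30-point head start is erased at a rate of 1.5 points per month, so the lines cross at month 20. Different slopes would move that crossover earlier or later.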

The Three Dimensions of Real Readiness

Organisational AI readiness has three distinct dimensions (among a few others), and weakness in any one of them limits what the other two can achieve.

Data Readiness is the foundation. AI systems perform only as well as the data they consume. Organisations that have not addressed data governance, pipeline integrity, and master data consistency before deployment will find that their AI outputs are unreliable, their models degrade over time, and their confidence in AI-driven decisions erodes. Data readiness is, of course, a continuous operational discipline rather than a one-time clean-up.

Process Readiness is where most implementations break down. The majority of AI deployments are bolt-ons to existing processes, and in most cases process redesign is skipped. A process designed for human execution, whether currently faulty or not, with its associated handoffs, exception handling, and approval layers, can’t perform well with AI inserted at a single point in the chain. Process readiness requires asking which processes need to be fundamentally redesigned or reimagined, not just automated, before AI can add durable value.

People Readiness, perhaps the most important dimension, is also the most consistently underestimated. It is part of Organisational Change Management, but it does not mean employee training, communications, programme marketing, or posters on the walls. People readiness means developing the organisational capacity to work with AI, consistently and iteratively, to:

- Formulate problems in ways AI can address,

- Interpret AI outputs critically,

- Identify where AI is adding error rather than removing it, and

- Continuously improve how human and AI capability interact.

This takes time, deliberate practice, and a workforce development strategy that most organisations have not yet written. It should also shape hiring decisions. Organisations hiring for transformations tend toward one of two patterns: intercept hires, chosen for familiarity with today’s specific tools, and slope hires, chosen for learning velocity and cross-disciplinary adaptability. Over a two-to-three-year horizon, a team built for slope will consistently outperform a team built for intercept. The reason is the same one that holds across fields: extensive multi-disciplinary development produces superlative performance, while intensive discipline-specific practice reaches a plateau.

What Slope-Building May Look Like in Practice

History teaches us that slope-building organisations share several observable characteristics. They run smaller, deeper transformations (AI implementations, in the current context) rather than broad, shallow ones, prioritising full process-chain integration over pilot proliferation. They measure the three gradients outlined in Part 2 of this series (link below) rather than quarterly ROI snapshots. They have a workforce development programme that runs continuously alongside technical deployment, not as a one-time onboarding exercise.

Critically, they treat the Ugly Middle, the phase where costs are rising, productivity is temporarily disrupted, and resistance is building, as evidence that the transformation is real, not as a signal to stop. Every significant organisational transformation has this phase. The long-term players, the slope-builders, plan for it. Those who look for brilliant positive spikes (which turn out, in time, to be anomalies) are surprised by it and retreat.

Slope Always Wins

The race in enterprise AI is not between organisations that have implemented and those that have not. It is between organisations that are building compounding capability and those that are accumulating expensive, disconnected pilots.

The former will not have the most impressive 2026 or 2027 AI announcements. They will have the most durable AI-driven competitive advantage in 2028 and beyond. The math on compounding trajectories has never been complicated. Slope always wins.

This is Part 3 of the series AI at the Wrong Speed.

Part 1: Your AI Implementation Speed Is Working Against You — examines why the incentive structure around AI deployment is producing fast implementations and fragile outcomes.

Part 2: Your AI ROI Numbers Are Telling You the Wrong Story — sets out why P&L snapshots mislead and what financial gradients to track instead.