Why Enterprise AI Readiness Determines Scaling Success

The gap between initial AI investment and actual business outcomes is widening for most firms. While ~88% of organizations have deployed AI in some capacity, only a small fraction achieve a measurable impact on their bottom line. Observers have pointed this out for the last two years, yet the situation has not changed. The 2025 McKinsey Global AI Survey indicates that only 5.5% of organizations see significant EBIT impact from their initiatives. The disparity persists because organizations often focus on deployment speed rather than enterprise AI readiness. When a program lacks the necessary structural foundations, it produces localized performance spikes that fade during broader rollout.

The Failure of the Bolt-On Strategy

Most organizations treat AI as an addition to existing workflows. This “bolt-on” strategy assumes that current processes can absorb new technology without significant modification. It mirrors the early errors of digital transformation, where new tools were layered over antiquated systems. Large consulting firms often dismiss the resulting friction as a cultural or emotional response, but the primary barriers are structural and technical.

High-performing organizations are 3x more likely to fundamentally redesign their workflows around AI rather than simply adding tools to existing ones. Achieving enterprise AI readiness requires moving beyond simple tool implementation to a complete reimagining of how work should be executed. Recent industry analysis in this State of AI Report 2025 video highlights the specific factors that separate these high performers from the majority.

Critiquing the “Emotional Side” Narrative

There is a prevailing narrative among the Big 5 consulting firms that AI failure is primarily a “change management” or “emotional” problem. This perspective is frequently used to justify long, expensive advisory engagements focused on cultural alignment. In reality, this is often a distraction from the mechanical failures of the implementation itself.

The resistance seen in workforces is rarely emotional; it is usually a rational response to broken process logic. When a generative AI tool is “bolted on” to a manual process, it often increases the cognitive load on the employee rather than reducing it. Workers are forced to verify outputs, manage new exception queues, and navigate fragmented data. If structural enterprise AI readiness is absent, no amount of “emotional alignment” will make the implementation successful.

Three Essential Dimensions of Preparation

To ensure an AI program compounds in value, leadership must address three specific readiness dimensions. Weakness in any single area restricts the potential of the others.

1. Data Integrity and “Consumability”: Successful AI requires data specifically prepared for consumption, which is different from standard reporting data. Standard enterprise data is often siloed, inconsistent, and formatted for human review. Gartner predicts that 60% of AI projects unsupported by AI-ready data will be abandoned through 2026. Data readiness involves moving from “passive storage” to “active context” where data is structured for LLM retrieval and reasoning.

2. Process Architecture Redesign: Processes must be redesigned to handle the specific requirements of AI, such as automated exception handling and new approval layers. A process that was designed for human-to-human handoffs will inevitably fail when an AI agent is introduced as a middle step. The architecture must be “AI-native,” meaning the workflow assumes AI will perform the first 80% of the task, with humans acting as final validators or exception handlers.

3. Capability Transfer over Tool Training: Most firms stop at “tool training,” teaching employees which buttons to click. True readiness involves capability transfer, which is the organizational capacity to formulate problems that AI can solve and to interpret outputs with critical skepticism. This is a technical and analytical skill set, not a behavioral one.

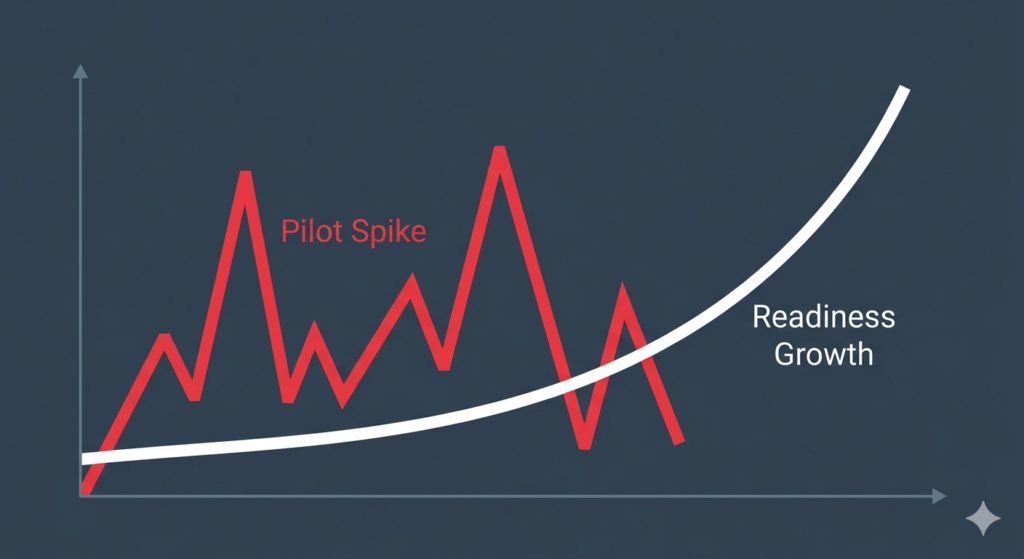

The Measurement Problem: Spikes vs. Slopes

A primary reason for pilot failure is the reliance on point-in-time ROI. Traditional consulting models focus on “spikes,” which are immediate, localized efficiency gains. These spikes are deceptive because they often rely on “clean room” conditions that do not exist at scale.

A “slope-builder” organization focuses on establishing cross-disciplinary foundations first. Estimates from MIT suggest that up to 95% of GenAI pilots fail to reach production because they cannot maintain the initial “spike” when moved into the messy reality of enterprise operations. By prioritizing enterprise AI readiness, firms focus on the “slope,” which is the rate at which the organization improves its ability to deploy AI across different functions.
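One way to make the spike-versus-slope distinction concrete is to compute both from the same series of measurements. The sketch below is illustrative only: the quarterly figures are invented, and `spike` and `slope` are hypothetical helper names, not metrics defined by McKinsey, Gartner, or MIT. It assumes the spike can be read as the best single-period gain and the slope as the least-squares trend across periods.

```python
from statistics import mean


def spike(gains):
    """Point-in-time view: the best single-period gain."""
    return max(gains)


def slope(gains):
    """Trend view: least-squares slope of gain per period."""
    n = len(gains)
    if n < 2:
        raise ValueError("need at least two periods to fit a trend")
    xs = range(n)
    x_bar, y_bar = mean(xs), mean(gains)
    num = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, gains))
    den = sum((x - x_bar) ** 2 for x in xs)
    return num / den


# Hypothetical efficiency gains (%) per quarter, invented for illustration.
pilot_first = [30, 18, 10, 6]       # strong pilot result that fades at rollout
foundation_first = [4, 9, 15, 22]   # slow start that builds over time

for name, gains in [("pilot_first", pilot_first),
                    ("foundation_first", foundation_first)]:
    print(f"{name}: spike={spike(gains)}, slope={slope(gains):+.1f} per quarter")
```

With these invented numbers, the pilot-first program shows the larger spike (30 versus 22) but a negative slope (-8 points per quarter), while the foundation-first program shows a positive slope (+6 points per quarter). A point-in-time ROI review would favor the first program; a trend view would favor the second.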

The Velocity Standard for Scaling

The most durable indicator of success is not how many tools are live today, but the learning velocity of the organization. Eventually, foundational investments in data, process, and capability reach an inflection point. From that stage on, value compounds across functions instead of accumulating one use case at a time.

The early years of digital transformation taught us that speed without readiness leads to technical debt. The AI era is no different. Organizations must resist the pressure from implementors to “go live” before the structural work is complete. Prioritizing enterprise AI readiness over immediate tool adoption ensures that the program achieves long-term compounding value rather than a premature plateau.

As a starting point, try this simple Readiness Assessment. It will surface the largest gaps in readiness, implementation plans, measurement mechanisms, and organizational maturity.